Cost Function (Squared error function)

The cost function helps us figure out how to fit the best possible straight line to our data.

Recall that m is the number of training examples (the number of rows). This is not the same m as the slope in y = mx + c below.

Parameters

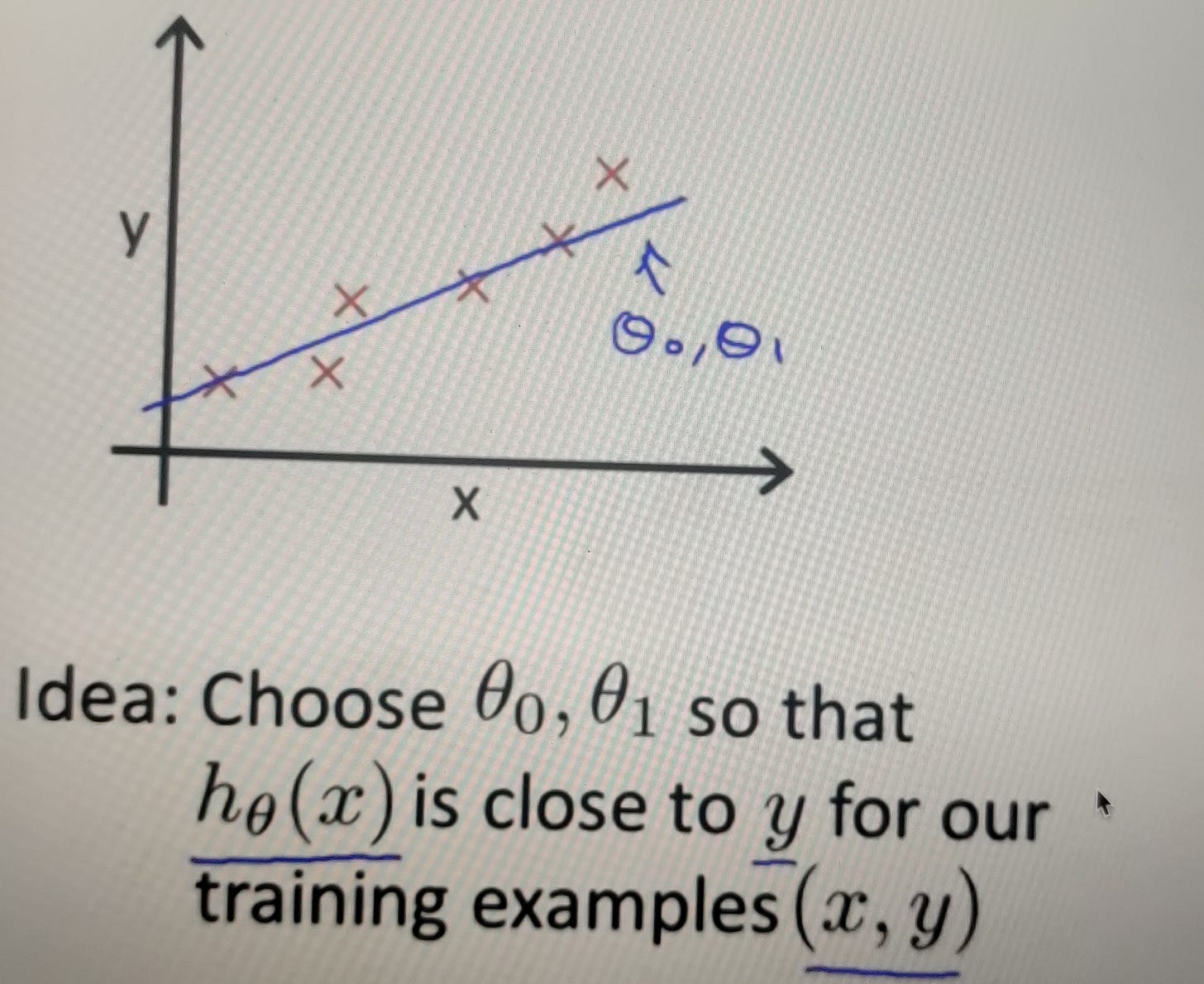

In linear regression we have a training set like the one plotted above. What we want to do is choose values for the parameters (theta 0 and theta 1) so that the straight line they define fits the data well.
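"Fits the data well" is made precise by the squared error cost function. Using the usual convention for this course (the 1/(2m) factor is a convention: the 1/2 tidies up the derivative and does not change which parameters minimise the cost), the hypothesis, the cost and the goal can be written as:

```latex
h_\theta(x) = \theta_0 + \theta_1 x

J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta\big(x^{(i)}\big) - y^{(i)} \right)^2

\min_{\theta_0,\, \theta_1} \; J(\theta_0, \theta_1)
```

Here (x^(i), y^(i)) is the i-th training example and m is the number of training examples. Each term is the squared vertical distance between the line's prediction and the actual y value.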

NOTE:

y = mx + c # equation of a line

theta 0 = c (the y-intercept)

theta 1 = m (the slope)

y ≈ h(x) = theta 0 + theta 1 * x  # the hypothesis: a straight line fixed by theta 0 and theta 1
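A minimal sketch of the hypothesis in Python, assuming a single input feature x. The function name `hypothesis` is just an illustrative choice. It shows that each choice of (theta 0, theta 1) gives a different straight line through the same inputs.

```python
def hypothesis(theta0: float, theta1: float, x: float) -> float:
    """h(x) = theta0 + theta1 * x, where theta0 is the intercept (c) and theta1 the slope (m)."""
    return theta0 + theta1 * x


# Two different parameter choices give two different straight lines.
xs = [0.0, 1.0, 2.0]
print([hypothesis(1.0, 2.0, x) for x in xs])  # → [1.0, 3.0, 5.0]
print([hypothesis(0.0, 0.5, x) for x in xs])  # → [0.0, 0.5, 1.0]
```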

Minimising the cost J(theta 0, theta 1) over theta 0 and theta 1
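To pick theta 0 and theta 1 we minimise the squared error cost J(theta 0, theta 1) = 1/(2m) * sum((theta 0 + theta 1 * x - y)^2). The sketch below evaluates J and finds its minimiser with the closed-form ordinary least squares formulas for one input feature. This closed form is a swapped-in technique chosen for brevity (iterative methods such as gradient descent reach the same minimum); the function names `cost` and `fit_least_squares` are hypothetical.

```python
from typing import Sequence, Tuple


def cost(theta0: float, theta1: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Squared error cost J(theta0, theta1) = 1/(2m) * sum((theta0 + theta1*x - y)^2)."""
    m = len(xs)  # number of training examples
    return sum((theta0 + theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


def fit_least_squares(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Return the (theta0, theta1) that minimise J for a single input feature.

    Assumes the xs are not all equal (otherwise the slope is undefined).
    """
    m = len(xs)
    x_mean = sum(xs) / m
    y_mean = sum(ys) / m
    # Slope = covariance(x, y) / variance(x); the 1/m factors cancel.
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    theta1 = sxy / sxx
    # The best-fit line always passes through the point (x_mean, y_mean).
    theta0 = y_mean - theta1 * x_mean
    return theta0, theta1


xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]  # these points lie exactly on y = 1 + 2x
theta0, theta1 = fit_least_squares(xs, ys)
print(theta0, theta1)                # → 1.0 2.0
print(cost(theta0, theta1, xs, ys))  # → 0.0
print(cost(0.0, 2.0, xs, ys))        # → 0.5 (every prediction is off by 1)
```

Because these points lie exactly on a line, the minimum cost is 0. With real, noisy data the minimum is positive, but it is still the smallest J over all possible choices of theta 0 and theta 1.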